Student: Researching Image Recognition Technology

Scenario

Frank is a computer science student who is studying image recognition technology.

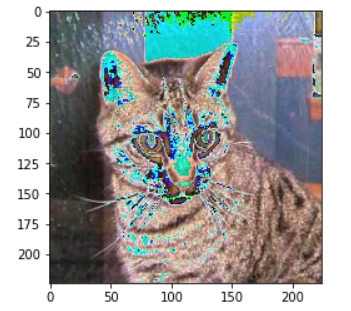

He wants to train a deep learning model that can recognize different breeds of cats, but his personal computer is not powerful enough for large-scale model training.

1. Register and create an instance.

After registering an account on NiceGPU, Frank used 1 NCU to create an instance with 4x NVIDIA RTX 4090 GPUs, based on the PyTorch application template.
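Before launching a long training job, it is worth confirming that PyTorch can actually see all four GPUs. The sketch below assumes only that the PyTorch template provides a CUDA-enabled `torch` build. `list_visible_gpus` is a hypothetical helper name. It returns an empty list, rather than raising, when `torch` or CUDA is unavailable.

```python
def list_visible_gpus() -> list[str]:
    """Return the names of the CUDA devices PyTorch can see (empty list if none)."""
    try:
        import torch  # assumed to be a CUDA-enabled build on the PyTorch template
    except ImportError:
        return []
    if not torch.cuda.is_available():
        return []
    return [torch.cuda.get_device_name(i) for i in range(torch.cuda.device_count())]


if __name__ == "__main__":
    gpus = list_visible_gpus()
    print(f"{len(gpus)} GPU(s) visible")
    for index, name in enumerate(gpus):
        print(f"  cuda:{index} -> {name}")
```

On a correctly provisioned 4x RTX 4090 instance this should report four devices. Fewer usually means the instance or its drivers need attention.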

- What is an NCU?
  - 1 NCU is equivalent to the computing power of one A100 GPU used continuously for 24 hours on our platform.
- Example usage scenarios and the NCU they typically require:
  - Small-scale machine learning model training:
    - Task: Train a simple image classification model (such as cat vs. dog classification).
    - Required NCU: 0.1-0.5 NCU. Small models have low computational requirements, so a small amount of NCU is enough.
  - Basic natural language processing tasks:
    - Task: Perform basic NLP tasks such as text classification and sentiment analysis.
    - Required NCU: 0.2-1 NCU. These tasks have moderate computational demands and are well suited to student experimentation.
  - Data preprocessing and feature engineering:
    - Task: Clean, transform, and extract features from large-scale datasets.
    - Required NCU: 0.5-2 NCU. Preprocessing is an important part of machine learning, and processing large datasets can consume significant computational resources.
- Factors affecting NCU requirements:
  - Model complexity: the more parameters a model has, the greater the computational load and the more NCU required.
  - Dataset size: the larger the dataset, the longer the training time and the more NCU required.
  - Target accuracy: reaching higher accuracy typically requires more training iterations, which consumes more computational resources.
  - Optimization algorithm: different optimization algorithms place different demands on computational resources.
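Because 1 NCU is defined as one A100 GPU used continuously for 24 hours, an NCU budget converts directly into A100 GPU-hours and back. The sketch below only applies that definition, and the helper names are hypothetical. Estimating how many A100 GPU-hours a particular job needs depends on the factors listed above and is left to the user.

```python
HOURS_PER_NCU = 24.0  # 1 NCU = one A100 GPU for 24 hours (platform definition)


def ncu_to_a100_hours(ncu: float) -> float:
    """Convert an NCU amount into equivalent A100 GPU-hours."""
    if ncu < 0:
        raise ValueError("NCU amount must be non-negative")
    return ncu * HOURS_PER_NCU


def a100_hours_to_ncu(gpu_hours: float) -> float:
    """Convert an estimated number of A100 GPU-hours into NCU."""
    if gpu_hours < 0:
        raise ValueError("GPU-hours must be non-negative")
    return gpu_hours / HOURS_PER_NCU


if __name__ == "__main__":
    # The example NCU ranges from the list above, expressed in A100 GPU-hours.
    for task, (low, high) in {
        "small image classifier": (0.1, 0.5),
        "basic NLP task": (0.2, 1.0),
        "data preprocessing": (0.5, 2.0),
    }.items():
        print(f"{task}: {ncu_to_a100_hours(low):.1f}-{ncu_to_a100_hours(high):.1f} A100 GPU-hours")
```

For instance, the 0.1-0.5 NCU range quoted for a small image classifier corresponds to about 2.4-12 A100 GPU-hours. A job estimated at 6 A100 GPU-hours would need 0.25 NCU.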

2. Upload the dataset

Frank uploaded a dataset of 100,000 cat images to the PyTorch instance's cloud storage at /data/cat_images.
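Before training, a quick inventory of the uploaded data can catch incomplete uploads or severe class imbalance. The sketch below makes two hypothetical assumptions:

- /data/cat_images follows the common one-subfolder-per-breed layout used by `torchvision.datasets.ImageFolder`.
- The images use the listed file extensions.

Adjust both if the dataset is organized differently.

```python
from collections import Counter
from pathlib import Path

# Assumed image extensions; extend as needed for the actual dataset.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".bmp", ".webp"}


def count_images_per_class(root: str) -> Counter:
    """Count image files under each immediate subdirectory of `root`.

    Assumes a hypothetical layout of root/<breed_name>/<image files>;
    loose files directly under `root` are ignored.
    """
    counts: Counter = Counter()
    for class_dir in sorted(Path(root).iterdir()):
        if not class_dir.is_dir():
            continue
        counts[class_dir.name] = sum(
            1
            for path in class_dir.rglob("*")
            if path.is_file() and path.suffix.lower() in IMAGE_EXTENSIONS
        )
    return counts


if __name__ == "__main__":
    dataset_root = Path("/data/cat_images")
    if dataset_root.is_dir():
        counts = count_images_per_class(str(dataset_root))
        print(f"{sum(counts.values())} images across {len(counts)} classes")
        for breed, n in counts.most_common():
            print(f"  {breed}: {n}")
    else:
        print(f"{dataset_root} not found")
```

Investigate any class with a count of 0, or counts that differ by orders of magnitude, before training.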

3. Write training code

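```python
import torch
from torch.utils.data import Dataset
from torchvision import transforms


class MyDataset(Dataset):
    def __init__(self, data_path: str, train=True, transform=None):
        self.data_path = data_path
        self.train_flag = train  # selects the training or validation split
        if transform is None:
            # Default preprocessing: resize to 224x224, convert to a tensor in [0, 1],
            # then normalize each RGB channel from [0, 1] to [-1, 1].
            self.transform = transforms.Compose(
                [
                    transforms.Resize(size=(224, 224)),
                    transforms.ToTensor(),
                    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)),
                ]
            )
        else:
            self.transform = transform


# ... (training code)
```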
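`MyDataset` takes a `train` flag, but the snippet does not show how images are assigned to the training or validation split. One common approach is a seeded shuffle of the sorted file list, so the same split is reproduced every time the dataset is constructed. This is an assumption, not Frank's actual code, and `split_train_val` and its parameters are hypothetical.

```python
import random
from typing import Sequence


def split_train_val(
    paths: Sequence[str], val_fraction: float = 0.1, seed: int = 42
) -> tuple[list[str], list[str]]:
    """Deterministically split file paths into (train, val) lists.

    Sorting first makes the result independent of directory listing order;
    the fixed seed makes the shuffle reproducible across runs.
    """
    if not 0.0 <= val_fraction < 1.0:
        raise ValueError("val_fraction must be in [0, 1)")
    shuffled = sorted(paths)
    random.Random(seed).shuffle(shuffled)
    n_val = int(len(shuffled) * val_fraction)
    return shuffled[n_val:], shuffled[:n_val]
```

With the 100,000 images from step 2 and the default `val_fraction=0.1`, this yields 90,000 training paths and 10,000 validation paths. `MyDataset` could then keep one list or the other depending on `self.train_flag`.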

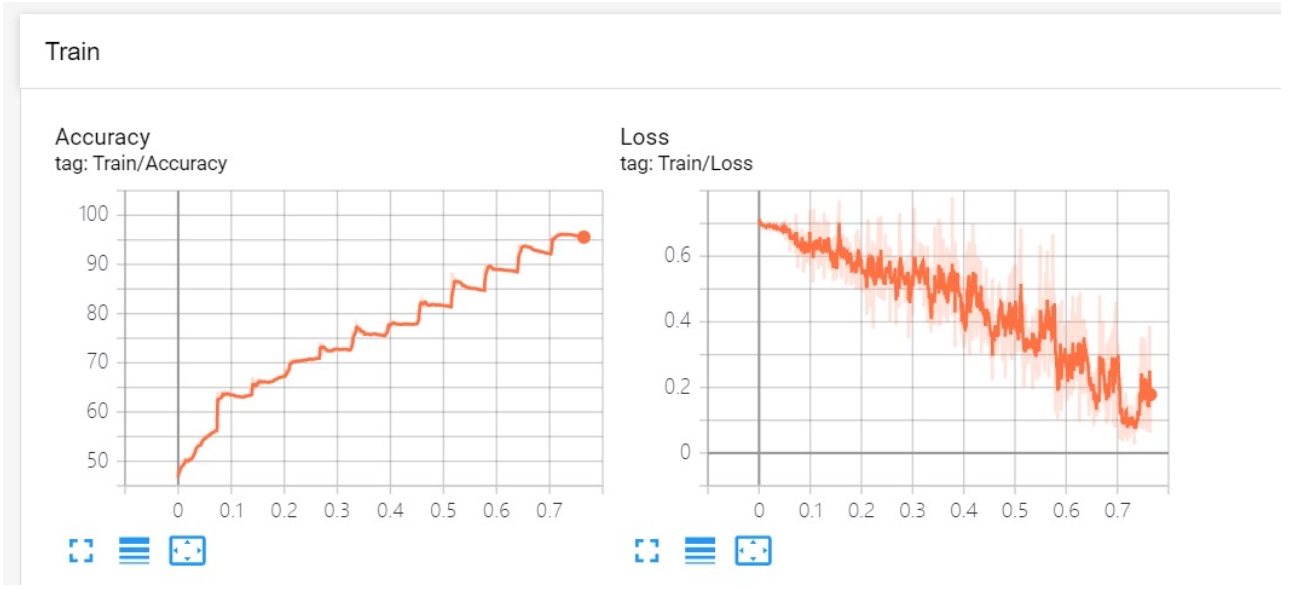

4. Monitor the training process.

Frank monitored training through the platform's web interface, viewing the training loss and accuracy curves in real time.
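The platform's monitoring API is not described here, so the sketch below only illustrates how the plotted values are typically computed:

- Per-epoch loss is a sample-weighted average of batch losses.
- Accuracy is correct predictions divided by samples seen.

`EpochMetrics` is a hypothetical helper. In a PyTorch loop, its inputs would come from `loss.item()`, the batch size, and `(logits.argmax(dim=1) == labels).sum().item()`.

```python
from dataclasses import dataclass


@dataclass
class EpochMetrics:
    """Accumulates loss and accuracy over one epoch (one point on each curve)."""

    total_loss: float = 0.0
    correct: int = 0
    seen: int = 0

    def update(self, batch_mean_loss: float, batch_correct: int, batch_size: int) -> None:
        # Weight the batch's mean loss by its size so a short final batch
        # does not count as much as a full one.
        self.total_loss += batch_mean_loss * batch_size
        self.correct += batch_correct
        self.seen += batch_size

    @property
    def mean_loss(self) -> float:
        return self.total_loss / self.seen if self.seen else 0.0

    @property
    def accuracy(self) -> float:
        return self.correct / self.seen if self.seen else 0.0
```

For example, consider two batches:

- Mean loss 0.8 over 64 samples, 40 correct.
- Mean loss 0.5 over 36 samples, 30 correct.

Together they give an epoch loss of 0.692 and an accuracy of 0.70.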

5. Summary

Shared computing platforms provide students with powerful computational resources, allowing them to focus on algorithm development and model optimization without worrying about hardware limitations.