AI Enthusiast - Explore ChatGLM

Scenario

Frank is an AI enthusiast with a passion for deep learning. He wants to explore a wide range of AI models, from image generation to natural language processing, without being limited by the hardware of his own computer.

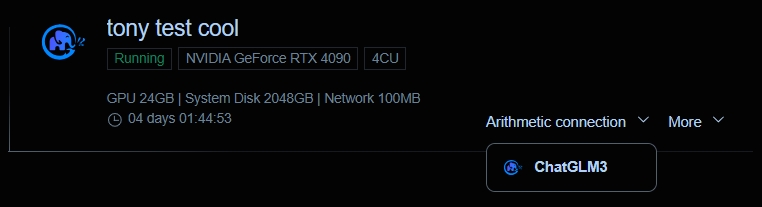

1. Register and create an instance.

After registering an account on NiceGPU, Frank spent 2 NCU to create an instance with one NVIDIA RTX 4090 GPU, based on the ChatGLM application template.

- What is NCU?

  - 1 NCU is equivalent to the computing power of one A100 GPU used continuously for 24 hours on our platform.

- Examples of usage scenarios and typical NCU budgets:

  - Medium-scale neural network training:

    - Task: Train a medium-sized computer vision model (for example, an object detector).

    - Required NCU: 2-5 NCU. Medium-sized models have higher compute requirements and need more NCU to speed up training.

  - Generative model experiments:

    - Task: Train generative models such as GANs and VAEs to generate images, text, and other content.

    - Required NCU: 3-8 NCU. Generative models are typically large and trained on extensive data, so they demand significant compute.

  - Reinforcement learning experiments:

    - Task: Train reinforcement learning agents to solve control problems.

    - Required NCU: 2-10 NCU. Reinforcement learning usually involves many experiments and iterations, which consumes substantial compute.

- Factors affecting NCU requirements:

  - Model complexity: The more parameters a model has, the greater the computational load and the more NCU it requires.

  - Dataset size: Larger datasets take longer to train on and therefore require more NCU.

  - Target accuracy: Reaching higher accuracy typically requires more training iterations, which consumes more compute.

  - Optimization algorithm: Different optimizers have different compute and memory costs. For example, Adam keeps two extra statistics per parameter that plain SGD does not.
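To make the NCU definition concrete, here is a minimal budgeting sketch. It converts between NCU and A100 GPU-hours using 1 NCU = 24 A100-hours, which comes from the definition above. It also makes a rough training estimate that combines model size, dataset size, and number of passes over the data. The estimate is an assumption, not the platform's billing formula. It relies on three inputs:

- the common rule of thumb that training a dense transformer costs about 6 x parameters x tokens floating-point operations per pass,
- the A100's dense BF16 tensor-core peak of 312 TFLOPS,
- an assumed 40% utilization.

The function names are hypothetical.

```python
# Rough NCU budgeting sketch (hypothetical helpers, not a NiceGPU API).
# Assumptions: 1 NCU = 24 A100 GPU-hours (from the NCU definition);
# A100 dense BF16 tensor-core peak = 312 TFLOPS; 40% utilization is a guess.

A100_HOURS_PER_NCU = 24.0
A100_PEAK_FLOPS = 312e12  # FLOP/s, dense BF16/FP16 tensor-core peak


def ncu_to_a100_hours(ncu: float) -> float:
    """Convert an NCU amount to equivalent A100 GPU-hours."""
    return ncu * A100_HOURS_PER_NCU


def a100_hours_to_ncu(hours: float) -> float:
    """Convert A100 GPU-hours to NCU."""
    return hours / A100_HOURS_PER_NCU


def estimate_training_ncu(params: float, tokens: float, epochs: int = 1,
                          utilization: float = 0.4) -> float:
    """Estimate the NCU needed to train a dense transformer.

    Uses the ~6 * params * tokens FLOP rule of thumb per pass over the data,
    so the cost grows with model size, dataset size, and number of passes.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    total_flops = 6.0 * params * tokens * epochs
    seconds = total_flops / (A100_PEAK_FLOPS * utilization)
    return a100_hours_to_ncu(seconds / 3600.0)


if __name__ == "__main__":
    print(f"2 NCU = {ncu_to_a100_hours(2):.0f} A100-hours")
    # Hypothetical example: 125M-parameter model, 2B training tokens, 1 epoch.
    print(f"Estimated budget: {estimate_training_ncu(125e6, 2e9):.2f} NCU")
```

By this definition, the 2 NCU Frank spent correspond to 48 A100-hours. The 6 x params x tokens term only applies to dense transformers. For the GANs or reinforcement learning agents mentioned above, substitute a FLOP count appropriate to that model.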

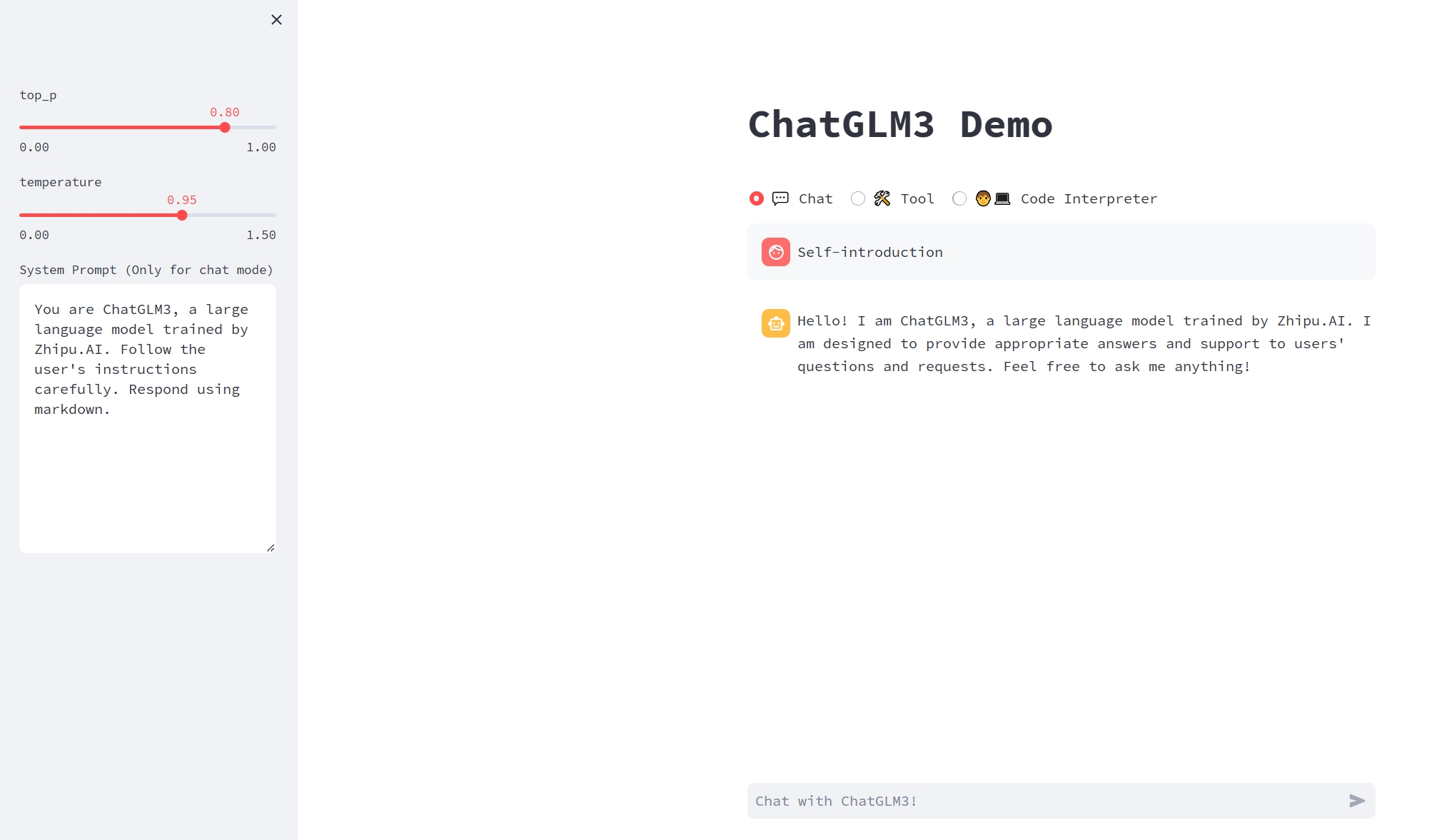

2. Use the model.

Through the interface the platform provides, Frank can interact directly with the ChatGLM model running in the cloud. He types in questions and receives answers.
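This section does not specify how the platform exposes the model. The sketch below assumes the instance serves ChatGLM over HTTP in the style of the `api.py` script in the ChatGLM-6B repository. Under that assumption, the client sends a JSON POST containing a `prompt` and the running `history` of (question, answer) turns. The server replies with JSON containing `response` and the updated `history`. The endpoint URL and the helper names are hypothetical, so adjust them to whatever your instance actually exposes.

```python
# Minimal chat client sketch. Assumption: the instance exposes a ChatGLM
# HTTP endpoint like ChatGLM-6B's api.py (POST JSON {"prompt", "history"},
# reply JSON {"response", "history", ...}). URL and names are hypothetical.
import json
import urllib.request

ENDPOINT = "http://127.0.0.1:8000"  # hypothetical; use your instance's address


def build_payload(prompt: str, history: list) -> bytes:
    """Encode the new question plus earlier (question, answer) turns as JSON."""
    return json.dumps({"prompt": prompt, "history": history}).encode("utf-8")


def parse_reply(body: bytes) -> tuple:
    """Extract the model's answer and the updated history from a reply body."""
    data = json.loads(body.decode("utf-8"))
    return data["response"], data.get("history", [])


def ask(prompt: str, history: list, url: str = ENDPOINT,
        timeout: float = 120.0) -> tuple:
    """Send one question and return (answer, updated_history)."""
    req = urllib.request.Request(
        url,
        data=build_payload(prompt, history),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return parse_reply(resp.read())
```

A multi-turn conversation starts with an empty history and passes the returned history back on each call. For example, call `answer, history = ask("What is ChatGLM?", [])` and then `ask("Summarize that in one sentence.", history)`. Resending the history lets the model see earlier turns.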

3. Summary

By using the shared computing power platform, Frank deployed the large language model ChatGLM in the cloud and interacted with it directly. This let him explore and apply advanced natural language processing technology more conveniently.